
The following tables list the computational complexity of various algorithms for common mathematical operations.

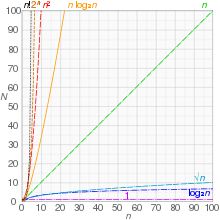

Here, complexity refers to the time complexity of performing computations on a multitape Turing machine.[1] See big O notation for an explanation of the notation used.

Note: Due to the variety of multiplication algorithms, $M(n)$ below stands in for the complexity of the chosen multiplication algorithm.

Arithmetic functions

This table lists the complexity of mathematical operations on integers.

| Operation | Input | Output | Algorithm | Complexity |
|---|---|---|---|---|
| Addition | Two $n$-digit numbers | One $(n+1)$-digit number | Schoolbook addition with carry | $\Theta(n)$ |
| Subtraction | Two $n$-digit numbers | One $(n+1)$-digit number | Schoolbook subtraction with borrow | $\Theta(n)$ |
| Multiplication | Two $n$-digit numbers | One $2n$-digit number | Schoolbook long multiplication | $O(n^2)$ |
| | | | Karatsuba algorithm | $O(n^{1.585})$ |
| | | | 3-way Toom–Cook multiplication | $O(n^{1.465})$ |
| | | | $k$-way Toom–Cook multiplication | $O(n^{\log(2k-1)/\log k})$ |
| | | | Mixed-level Toom–Cook (Knuth 4.3.3-T)[2] | $O(n\,2^{\sqrt{2\log n}}\log n)$ |
| | | | Schönhage–Strassen algorithm | $O(n\log n\log\log n)$ |
| | | | Harvey–Hoeven algorithm[3][4] | $O(n\log n)$ |
| Division | Two $n$-digit numbers | One $n$-digit number | Schoolbook long division | $O(n^2)$ |
| | | | Burnikel–Ziegler divide-and-conquer division[5] | $O(M(n)\log n)$ |
| | | | Newton–Raphson division | $O(M(n))$ |
| Square root | One $n$-digit number | One $n$-digit number | Newton's method | $O(M(n))$ |
| Modular exponentiation | Two $n$-digit integers and a $k$-bit exponent | One $n$-digit integer | Repeated multiplication and reduction | $O(M(n)\,2^k)$ |
| | | | Exponentiation by squaring | $O(M(n)\,k)$ |
| | | | Exponentiation with Montgomery reduction | $O(M(n)\,k)$ |
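To make the gap between the schoolbook and Karatsuba rows concrete, here is a minimal Python sketch of Karatsuba's recursion, which replaces four half-size products with three. The function name `karatsuba` and the `cutoff_bits` threshold are illustrative choices, not part of any cited source, and the sketch splits on bits rather than decimal digits.

```python
def karatsuba(x: int, y: int, cutoff_bits: int = 64) -> int:
    """Multiply non-negative integers with Karatsuba's three-product recursion."""
    if x < 0 or y < 0:
        raise ValueError("this sketch handles non-negative integers only")
    # Small operands: fall back to the built-in product.
    if x.bit_length() <= cutoff_bits or y.bit_length() <= cutoff_bits:
        return x * y

    # Split both operands at the same bit position.
    half = max(x.bit_length(), y.bit_length()) // 2
    mask = (1 << half) - 1
    x_hi, x_lo = x >> half, x & mask
    y_hi, y_lo = y >> half, y & mask

    # Three recursive products instead of four.
    low = karatsuba(x_lo, y_lo, cutoff_bits)
    high = karatsuba(x_hi, y_hi, cutoff_bits)
    cross = karatsuba(x_lo + x_hi, y_lo + y_hi, cutoff_bits) - low - high

    # Recombine: x*y = high * 2^(2*half) + cross * 2^half + low.
    return (high << (2 * half)) + (cross << half) + low
```

The recurrence $T(n) = 3T(n/2) + O(n)$ solves to $O(n^{\log_2 3}) \approx O(n^{1.585})$, which is where the exponent in the table comes from.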

On stronger computational models, specifically a pointer machine and consequently also a unit-cost random-access machine, it is possible to multiply two n-bit numbers in time O(n).[6]

Algebraic functions

Here we consider operations over polynomials and n denotes their degree; for the coefficients we use a unit-cost model, ignoring the number of bits in a number. In practice this means that we assume them to be machine integers.

| Operation | Input | Output | Algorithm | Complexity |
|---|---|---|---|---|
| Polynomial evaluation | One polynomial of degree $n$ with integer coefficients | One number | Direct evaluation | $\Theta(n)$ |
| | | | Horner's method | $\Theta(n)$ |
| Polynomial gcd (over $\mathbb{Z}[x]$ or $F[x]$) | Two polynomials of degree $n$ with integer coefficients | One polynomial of degree at most $n$ | Euclidean algorithm | $O(n^2)$ |
| | | | Fast Euclidean algorithm (Lehmer)[citation needed] | $O(n\log^2 n)$ |
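Both polynomial evaluation rows are linear in the degree under the unit-cost model, but they differ in the constant. The sketch below, with the hypothetical names `direct_evaluation` and `horner`, assumes coefficients are stored lowest degree first; direct evaluation spends two multiplications per coefficient, Horner's method one.

```python
def direct_evaluation(coeffs, x):
    """Evaluate sum(coeffs[i] * x**i) while keeping a running power of x."""
    total, power = 0, 1
    for c in coeffs:
        total += c * power   # one multiplication for the term
        power *= x           # one multiplication to advance the power
    return total


def horner(coeffs, x):
    """Evaluate the same polynomial as (...(c_n*x + c_{n-1})*x + ...)*x + c_0."""
    result = 0
    for c in reversed(coeffs):
        result = result * x + c   # one multiplication per coefficient
    return result
```

For example, with `coeffs = [1, 2, 3]` (that is, $1 + 2x + 3x^2$) both functions return 17 at `x = 2`.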

Special functions

Many of the methods in this section are given in Borwein & Borwein.[7]

Elementary functions

The elementary functions are constructed by composing arithmetic operations, the exponential function ($\exp$), the natural logarithm ($\log$), trigonometric functions ($\sin$, $\cos$), and their inverses. The complexity of an elementary function is equivalent to that of its inverse, since all elementary functions are analytic and hence invertible by means of Newton's method. In particular, if either $\exp$ or $\log$ in the complex domain can be computed with some complexity, then that complexity is attainable for all other elementary functions.
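The inversion argument can be illustrated with a small Python sketch that computes the exponential from the logarithm alone. Applying Newton's method to $f(y) = \log y - x$ gives the step $y \leftarrow y\,(1 + x - \log y)$, which roughly doubles the number of correct digits each time. The function name `exp_by_newton`, the ten guard digits, and the use of the standard `decimal` module are assumptions of this sketch; it also assumes $x$ is moderate enough for the double-precision starting value not to overflow.

```python
from decimal import Decimal, localcontext
import math


def exp_by_newton(x: Decimal, digits: int = 50) -> Decimal:
    """Approximate exp(x) to about `digits` significant digits using only ln."""
    with localcontext() as ctx:
        ctx.prec = digits + 10                # working precision with guard digits
        y = Decimal(math.exp(float(x)))       # start: roughly 15 correct digits
        correct = 15
        while correct < ctx.prec:
            # Newton step for f(y) = ln(y) - x, where f'(y) = 1/y.
            y = y * (1 + x - y.ln())
            correct *= 2                      # quadratic convergence
        ctx.prec = digits
        return +y                             # round to the requested precision
```

A production implementation would also grow the working precision step by step, so that the total cost is dominated by the last iteration; this sketch keeps the precision fixed for simplicity.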

Below, the size $n$ refers to the number of digits of precision at which the function is to be evaluated.

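| Algorithm | Applicability | Complexity |
|---|---|---|
| Taylor series; repeated argument reduction (e.g. $\exp(2x) = [\exp(x)]^2$) and direct summation | $\exp, \log, \sin, \cos, \arctan$ | $O(M(n)\,n^{1/2})$ |
| Taylor series; FFT-based acceleration | $\exp, \log, \sin, \cos, \arctan$ | $O(M(n)\,n^{1/3}(\log n)^2)$ |
| Taylor series; binary splitting + bit-burst algorithm[8] | $\exp, \log, \sin, \cos, \arctan$ | $O(M(n)(\log n)^2)$ |
| Arithmetic–geometric mean iteration[9] | $\exp, \log, \sin, \cos, \arctan$ | $O(M(n)\log n)$ |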

It is not known whether $O(M(n)\log n)$ is the optimal complexity for elementary functions. The best known lower bound is the trivial bound $\Omega(M(n))$.

Non-elementary functions

| Function | Input | Algorithm | Complexity |
|---|---|---|---|
| Gamma function | $n$-digit number | Series approximation of the incomplete gamma function | $O(M(n)\,n^{1/2}(\log n)^2)$ |
| | Fixed rational number | Hypergeometric series | $O(M(n)(\log n)^2)$ |
| | $m/24$, for $m$ an integer | Arithmetic–geometric mean iteration | $O(M(n)\log n)$ |
| Hypergeometric function ${}_pF_q$ | $n$-digit number | (As described in Borwein & Borwein) | $O(M(n)\,n^{1/2}(\log n)^2)$ |
| | Fixed rational number | Hypergeometric series | $O(M(n)(\log n)^2)$ |

Mathematical constants

This table gives the complexity of computing approximations to the given constants to $n$ correct digits.

| Constant | Algorithm | Complexity |
|---|---|---|
| Golden ratio, $\phi$ | Newton's method | $O(M(n))$ |
| Square root of 2, $\sqrt{2}$ | Newton's method | $O(M(n))$ |
| Euler's number, $e$ | Binary splitting of the Taylor series for the exponential function | $O(M(n)\log n)$ |
| | Newton inversion of the natural logarithm | $O(M(n)\log n)$ |
| Pi, $\pi$ | Binary splitting of the arctan series in Machin's formula | $O(M(n)(\log n)^2)$[10] |
| | Gauss–Legendre algorithm | $O(M(n)\log n)$[10] |
| Euler's constant, $\gamma$ | Sweeney's method (approximation in terms of the exponential integral) | $O(M(n)(\log n)^2)$ |
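As an illustration of the Newton's method row for $\sqrt{2}$, the following Python sketch computes $\lfloor \sqrt{2}\cdot 10^n \rfloor$ with the integer Newton iteration $x \leftarrow \lfloor (x + \lfloor N/x \rfloor)/2 \rfloor$ applied to $N = 2\cdot 10^{2n}$. The function name `sqrt2_digits` is hypothetical. Note one simplification: every step here runs at full precision, so the sketch costs about $O(M(n)\log n)$; reaching the $O(M(n))$ bound in the table requires doubling the working precision from step to step.

```python
def sqrt2_digits(n: int) -> int:
    """Return floor(sqrt(2) * 10**n) using integer Newton iteration."""
    target = 2 * 10 ** (2 * n)
    x = 10 ** (n + 1)            # any starting value above the true root works
    while True:
        y = (x + target // x) // 2
        if y >= x:               # the sequence stopped decreasing: x is the floor
            return x
        x = y
```

For instance, `sqrt2_digits(5)` returns `141421`, the first six digits of $\sqrt{2}$.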

Number theory

Algorithms for number theoretical calculations are studied in computational number theory.

| Operation | Input | Output | Algorithm | Complexity |
|---|---|---|---|---|
| Greatest common divisor | Two $n$-digit integers | One integer with at most $n$ digits | Euclidean algorithm | $O(n^2)$ |
| | | | Binary GCD algorithm | $O(n^2)$ |
| | | | Left/right $k$-ary binary GCD algorithm[11] | $O(n^2/\log n)$ |
| | | | Stehlé–Zimmermann algorithm[12] | $O(M(n)\log n)$ |
| | | | Schönhage controlled Euclidean descent algorithm[13] | $O(M(n)\log n)$ |
| Jacobi symbol | Two $n$-digit integers | $0$, $-1$ or $1$ | Schönhage controlled Euclidean descent algorithm[14] | $O(M(n)\log n)$ |
| | | | Stehlé–Zimmermann algorithm[15] | $O(M(n)\log n)$ |
| Factorial | A positive integer less than $m$ | One $O(m\log m)$-digit integer | Bottom-up multiplication | $O(M(m^2)\log m)$ |
| | | | Binary splitting | $O(M(m\log m)\log m)$ |
| | | | Exponentiation of the prime factors of $m$ | $O(M(m\log m)\log\log m)$,[16] $O(M(m\log m))$[1] |
| Primality test | An $n$-digit integer | True or false | AKS primality test | $O(n^{6+o(1)})$[17][18]; $\tilde{O}(n^3)$, assuming Agrawal's conjecture |
| | | | Elliptic curve primality proving | $O(n^{4+\varepsilon})$ heuristically[19] |
| | | | Baillie–PSW primality test | $O(n^{2+\varepsilon})$[20][21] |
| | | | Miller–Rabin primality test | $O(k\,n^{2+\varepsilon})$[22] |
| | | | Solovay–Strassen primality test | $O(k\,n^{2+\varepsilon})$[22] |
| Integer factorization | A $b$-bit input integer | A set of factors | General number field sieve | $O((1+\varepsilon)^b)$[nb 1] |
| | | | Shor's algorithm | $O(M(b)\,b)$, on a quantum computer |
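The binary GCD row can be sketched in a few lines of Python. The algorithm replaces the divisions of the Euclidean algorithm with shifts and subtractions, using the identities $\gcd(2a, 2b) = 2\gcd(a, b)$, $\gcd(2a, b) = \gcd(a, b)$ for odd $b$, and $\gcd(a, b) = \gcd(a, b - a)$. The function name `binary_gcd` is illustrative; this is a sketch of the textbook method, not the left/right $k$-ary variant of [11].

```python
def binary_gcd(a: int, b: int) -> int:
    """Greatest common divisor using only shifts, comparisons and subtraction."""
    a, b = abs(a), abs(b)
    if a == 0:
        return b
    if b == 0:
        return a

    # Number of factors of two shared by a and b.
    shift = ((a | b) & -(a | b)).bit_length() - 1

    # Strip all factors of two from a, so a stays odd from here on.
    a >>= (a & -a).bit_length() - 1
    while b:
        b >>= (b & -b).bit_length() - 1   # make b odd
        if a > b:
            a, b = b, a                   # keep a <= b
        b -= a                            # odd - odd is even (or zero)
    return a << shift
```

Each loop iteration removes at least one bit from `b`, so there are $O(n)$ iterations of $O(n)$ work each, matching the quadratic bound in the table.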

Matrix algebra

The following complexity figures assume that arithmetic with individual elements has complexity O(1), as is the case with fixed-precision floating-point arithmetic or operations on a finite field.

| Operation | Input | Output | Algorithm | Complexity |
|---|---|---|---|---|
| Matrix multiplication | Two $n\times n$ matrices | One $n\times n$ matrix | Schoolbook matrix multiplication | $O(n^3)$ |
| | | | Strassen algorithm | $O(n^{2.807})$ |
| | | | Coppersmith–Winograd algorithm (galactic algorithm) | $O(n^{2.376})$ |
| | | | Optimized CW-like algorithms[23][24][25][26] (galactic algorithms) | $O(n^{2.3728596})$ |
| Matrix multiplication | One $n\times m$ matrix, and one $m\times p$ matrix | One $n\times p$ matrix | Schoolbook matrix multiplication | $O(nmp)$ |
| Matrix multiplication | One $n\times\lceil n^k\rceil$ matrix, and one $\lceil n^k\rceil\times n$ matrix, for some $k\geq 0$ | One $n\times n$ matrix | Algorithms given in [27] | $O(n^{\omega(k)+\varepsilon})$, where upper bounds on $\omega(k)$ are given in [27] |
| Matrix inversion | One $n\times n$ matrix | One $n\times n$ matrix | Gauss–Jordan elimination | $O(n^3)$ |
| | | | Strassen algorithm | $O(n^{2.807})$ |
| | | | Coppersmith–Winograd algorithm | $O(n^{2.376})$ |
| | | | Optimized CW-like algorithms | $O(n^{2.3728596})$ |
| Singular value decomposition | One $m\times n$ matrix | One $m\times m$ matrix, one $m\times n$ matrix, & one $n\times n$ matrix | Bidiagonalization and QR algorithm | $O(mn^2)$ ($m\geq n$) |
| | | One matrix, one matrix, & one matrix | Bidiagonalization and QR algorithm | $O(m^2n)$ ($m\leq n$) |
| QR decomposition | One $m\times n$ matrix | One $m\times m$ matrix, & one $m\times n$ matrix | Algorithms in [28] | $O(mn^2)$ ($m\geq n$) |
| Determinant | One $n\times n$ matrix | One number | Laplace expansion | $O(n!)$ |
| | | | Division-free algorithm[29] | $O(n^4)$ |
| | | | LU decomposition | $O(n^3)$ |
| | | | Bareiss algorithm | $O(n^3)$ |
| | | | Fast matrix multiplication[30] | $O(n^{2.373})$ |
| Back substitution | Triangular matrix | $n$ solutions | Back substitution[31] | $O(n^2)$ |
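The Strassen rows can be made concrete with a short Python sketch on nested lists. It assumes square matrices whose size is a power of two and uses the usual seven-product scheme; the helper names (`mat_add`, `mat_sub`, `schoolbook`, `strassen`) and the `cutoff` parameter are illustrative choices rather than anything prescribed by the sources cited here.

```python
def mat_add(A, B):
    return [[a + b for a, b in zip(ra, rb)] for ra, rb in zip(A, B)]


def mat_sub(A, B):
    return [[a - b for a, b in zip(ra, rb)] for ra, rb in zip(A, B)]


def schoolbook(A, B):
    """Cubic-time product, used as the base case."""
    cols = list(zip(*B))
    return [[sum(a * b for a, b in zip(row, col)) for col in cols] for row in A]


def strassen(A, B, cutoff=2):
    """Multiply n x n matrices (n a power of two) with seven recursive products."""
    n = len(A)
    if n <= max(cutoff, 1):
        return schoolbook(A, B)
    h = n // 2
    # Split each matrix into four h x h blocks.
    A11 = [r[:h] for r in A[:h]]; A12 = [r[h:] for r in A[:h]]
    A21 = [r[:h] for r in A[h:]]; A22 = [r[h:] for r in A[h:]]
    B11 = [r[:h] for r in B[:h]]; B12 = [r[h:] for r in B[:h]]
    B21 = [r[:h] for r in B[h:]]; B22 = [r[h:] for r in B[h:]]

    M1 = strassen(mat_add(A11, A22), mat_add(B11, B22), cutoff)
    M2 = strassen(mat_add(A21, A22), B11, cutoff)
    M3 = strassen(A11, mat_sub(B12, B22), cutoff)
    M4 = strassen(A22, mat_sub(B21, B11), cutoff)
    M5 = strassen(mat_add(A11, A12), B22, cutoff)
    M6 = strassen(mat_sub(A21, A11), mat_add(B11, B12), cutoff)
    M7 = strassen(mat_sub(A12, A22), mat_add(B21, B22), cutoff)

    C11 = mat_add(mat_sub(mat_add(M1, M4), M5), M7)
    C12 = mat_add(M3, M5)
    C21 = mat_add(M2, M4)
    C22 = mat_add(mat_add(mat_sub(M1, M2), M3), M6)

    # Stitch the four blocks back together.
    top = [l + r for l, r in zip(C11, C12)]
    bottom = [l + r for l, r in zip(C21, C22)]
    return top + bottom
```

Seven half-size products plus $O(n^2)$ additions give $T(n) = 7T(n/2) + O(n^2)$, hence $O(n^{\log_2 7}) \approx O(n^{2.807})$.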

In 2005, Henry Cohn, Robert Kleinberg, Balázs Szegedy, and Chris Umans showed that either of two different conjectures would imply that the exponent of matrix multiplication is 2.[32]

Transforms

Algorithms for computing transforms of functions (particularly integral transforms) are widely used in all areas of mathematics, particularly analysis and signal processing.

| Operation | Input | Output | Algorithm | Complexity |
|---|---|---|---|---|
| Discrete Fourier transform | Finite data sequence of size $n$ | Set of complex numbers | Schoolbook | $O(n^2)$ |
| | | | Fast Fourier transform | $O(n\log n)$ |
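The two rows of the table can be compared directly in code. The Python sketch below places the schoolbook $O(n^2)$ transform next to a recursive radix-2 Cooley–Tukey FFT, which is one common FFT variant and is chosen here only for illustration. The names `dft` and `fft` are hypothetical, and the FFT assumes the input length is a power of two.

```python
import cmath


def dft(x):
    """Schoolbook discrete Fourier transform: n outputs, n terms each."""
    n = len(x)
    return [
        sum(x[j] * cmath.exp(-2j * cmath.pi * j * k / n) for j in range(n))
        for k in range(n)
    ]


def fft(x):
    """Recursive radix-2 FFT; len(x) must be a power of two."""
    n = len(x)
    if n == 0:
        raise ValueError("input must be non-empty")
    if n == 1:
        return list(x)
    if n % 2:
        raise ValueError("length must be a power of two")
    even = fft(x[0::2])          # transform of even-indexed samples
    odd = fft(x[1::2])           # transform of odd-indexed samples
    out = [0j] * n
    for k in range(n // 2):
        twiddle = cmath.exp(-2j * cmath.pi * k / n) * odd[k]
        out[k] = even[k] + twiddle
        out[k + n // 2] = even[k] - twiddle
    return out
```

Each level of recursion does $O(n)$ work and there are $\log_2 n$ levels, which gives the $O(n\log n)$ bound.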

Notes

- [nb 1] This form of sub-exponential time is valid for all $\varepsilon > 0$. A more precise form of the complexity can be given as $O\!\left(\exp\sqrt[3]{\tfrac{64}{9}\,b\,(\log b)^2}\right)$.

References

1. Schönhage, A.; Grotefeld, A.F.W.; Vetter, E. (1994). Fast Algorithms: A Multitape Turing Machine Implementation. BI Wissenschafts-Verlag. ISBN 978-3-411-16891-0. OCLC 897602049.

2. Knuth 1997

3. Harvey, D.; Van Der Hoeven, J. (2021). "Integer multiplication in time O(n log n)" (PDF). Annals of Mathematics. 193 (2): 563–617. doi:10.4007/annals.2021.193.2.4. S2CID 109934776.

4. Klarreich, Erica (December 2019). "Multiplication hits the speed limit". Commun. ACM. 63 (1): 11–13. doi:10.1145/3371387. S2CID 209450552.

5. Burnikel, Christoph; Ziegler, Joachim (1998). Fast Recursive Division. Forschungsberichte des Max-Planck-Instituts für Informatik. Saarbrücken: MPI Informatik Bibliothek & Dokumentation. OCLC 246319574. MPII-98-1-022.

6. Schönhage, Arnold (1980). "Storage Modification Machines". SIAM Journal on Computing. 9 (3): 490–508. doi:10.1137/0209036.

7. Borwein, J.; Borwein, P. (1987). Pi and the AGM: A Study in Analytic Number Theory and Computational Complexity. Wiley. ISBN 978-0-471-83138-9. OCLC 755165897.

8. Chudnovsky, David; Chudnovsky, Gregory (1988). "Approximations and complex multiplication according to Ramanujan". Ramanujan revisited: Proceedings of the Centenary Conference. Academic Press. pp. 375–472. ISBN 978-0-01-205856-5.

9. Brent, Richard P. (2014) [1975]. "Multiple-precision zero-finding methods and the complexity of elementary function evaluation". In Traub, J.F. (ed.). Analytic Computational Complexity. Elsevier. pp. 151–176. arXiv:1004.3412. ISBN 978-1-4832-5789-1.

10. Brent, Richard P. (2020). The Borwein Brothers, Pi and the AGM. Springer Proceedings in Mathematics & Statistics. Vol. 313. arXiv:1802.07558. doi:10.1007/978-3-030-36568-4. ISBN 978-3-030-36567-7. S2CID 214742997.

11. Sorenson, J. (1994). "Two Fast GCD Algorithms". Journal of Algorithms. 16 (1): 110–144. doi:10.1006/jagm.1994.1006.

12. Crandall, R.; Pomerance, C. (2005). "Algorithm 9.4.7 (Stehlé-Zimmerman binary-recursive-gcd)". Prime Numbers: A Computational Perspective (2nd ed.). Springer. pp. 471–3. ISBN 978-0-387-28979-3.

13. Möller, N. (2008). "On Schönhage's algorithm and subquadratic integer gcd computation" (PDF). Mathematics of Computation. 77 (261): 589–607. Bibcode:2008MaCom..77..589M. doi:10.1090/S0025-5718-07-02017-0.

14. Bernstein, D.J. "Faster Algorithms to Find Non-squares Modulo Worst-case Integers".

15. Brent, Richard P.; Zimmermann, Paul (2010). "An algorithm for the Jacobi symbol". International Algorithmic Number Theory Symposium. Springer. pp. 83–95. arXiv:1004.2091. doi:10.1007/978-3-642-14518-6_10. ISBN 978-3-642-14518-6. S2CID 7632655.

16. Borwein, P. (1985). "On the complexity of calculating factorials". Journal of Algorithms. 6 (3): 376–380. doi:10.1016/0196-6774(85)90006-9.

17. Lenstra jr., H.W.; Pomerance, Carl (2019). "Primality testing with Gaussian periods" (PDF). Journal of the European Mathematical Society. 21 (4): 1229–69. doi:10.4171/JEMS/861. hdl:21.11116/0000-0005-717D-0.

18. Tao, Terence (2010). "1.11 The AKS primality test". An epsilon of room, II: Pages from year three of a mathematical blog. Graduate Studies in Mathematics. Vol. 117. American Mathematical Society. pp. 82–86. doi:10.1090/gsm/117. ISBN 978-0-8218-5280-4. MR 2780010.

19. Morain, F. (2007). "Implementing the asymptotically fast version of the elliptic curve primality proving algorithm". Mathematics of Computation. 76 (257): 493–505. arXiv:math/0502097. Bibcode:2007MaCom..76..493M. doi:10.1090/S0025-5718-06-01890-4. MR 2261033. S2CID 133193.

20. Pomerance, Carl; Selfridge, John L.; Wagstaff, Jr., Samuel S. (July 1980). "The pseudoprimes to $25\cdot 10^9$" (PDF). Mathematics of Computation. 35 (151): 1003–26. doi:10.1090/S0025-5718-1980-0572872-7. JSTOR 2006210.

21. Baillie, Robert; Wagstaff, Jr., Samuel S. (October 1980). "Lucas Pseudoprimes" (PDF). Mathematics of Computation. 35 (152): 1391–1417. doi:10.1090/S0025-5718-1980-0583518-6. JSTOR 2006406. MR 0583518.

22. Monier, Louis (1980). "Evaluation and comparison of two efficient probabilistic primality testing algorithms". Theoretical Computer Science. 12 (1): 97–108. doi:10.1016/0304-3975(80)90007-9. MR 0582244.

23. Alman, Josh; Williams, Virginia Vassilevska (2020). "A Refined Laser Method and Faster Matrix Multiplication". 32nd Annual ACM-SIAM Symposium on Discrete Algorithms (SODA 2021). arXiv:2010.05846. doi:10.1137/1.9781611976465.32. S2CID 222290442.

24. Davie, A.M.; Stothers, A.J. (2013). "Improved bound for complexity of matrix multiplication". Proceedings of the Royal Society of Edinburgh. 143A (2): 351–370. doi:10.1017/S0308210511001648. S2CID 113401430.

25. Vassilevska Williams, Virginia (2014). Breaking the Coppersmith-Winograd barrier: Multiplying matrices in $O(n^{2.373})$ time.

26. Le Gall, François (2014). "Powers of tensors and fast matrix multiplication". Proceedings of the 39th International Symposium on Symbolic and Algebraic Computation (ISSAC '14). p. 23. arXiv:1401.7714. Bibcode:2014arXiv1401.7714L. doi:10.1145/2608628.2627493. ISBN 9781450325011. S2CID 353236.

27. Le Gall, François; Urrutia, Floren (2018). "Improved Rectangular Matrix Multiplication using Powers of the Coppersmith-Winograd Tensor". In Czumaj, Artur (ed.). Proceedings of the Twenty-Ninth Annual ACM-SIAM Symposium on Discrete Algorithms. Society for Industrial and Applied Mathematics. doi:10.1137/1.9781611975031.67. ISBN 978-1-61197-503-1. S2CID 33396059.

28. Knight, Philip A. (May 1995). "Fast rectangular matrix multiplication and QR decomposition". Linear Algebra and its Applications. 221: 69–81. doi:10.1016/0024-3795(93)00230-w. ISSN 0024-3795.

29. Rote, G. (2001). "Division-free algorithms for the determinant and the pfaffian: algebraic and combinatorial approaches" (PDF). Computational discrete mathematics. Springer. pp. 119–135. ISBN 3-540-45506-X.

30. Aho, Alfred V.; Hopcroft, John E.; Ullman, Jeffrey D. (1974). "Theorem 6.6". The Design and Analysis of Computer Algorithms. Addison-Wesley. p. 241. ISBN 978-0-201-00029-0.

31. Fraleigh, J.B.; Beauregard, R.A. (1987). Linear Algebra (3rd ed.). Addison-Wesley. p. 95. ISBN 978-0-201-15459-7.

32. Cohn, Henry; Kleinberg, Robert; Szegedy, Balazs; Umans, Chris (2005). "Group-theoretic Algorithms for Matrix Multiplication". Proceedings of the 46th Annual Symposium on Foundations of Computer Science. IEEE. pp. 379–388. arXiv:math.GR/0511460. doi:10.1109/SFCS.2005.39. ISBN 0-7695-2468-0. S2CID 6429088.

Further reading

- Brent, Richard P.; Zimmermann, Paul (2010). Modern Computer Arithmetic. Cambridge University Press. ISBN 978-0-521-19469-3.

- Knuth, Donald Ervin (1997). Seminumerical Algorithms. The Art of Computer Programming. Vol. 2 (3rd ed.). Addison-Wesley. ISBN 978-0-201-89684-8.